Alibaba Loses AI Prodigy, OpenAI Preempts DeepSeek in Congress, & GPT-5.4 Launches Into a Boycott

Alibaba bleeds AI talent, DeepSeek faces theft allegations, and OpenAI's best launch gets overshadowed

Qwen’s Builder Walks Away from Alibaba

Lin Junyang, 32 years old, Alibaba’s youngest P10 executive, posted on X at midnight Beijing time on March 4: “me stepping down. bye my beloved qwen.”

Lin built Qwen from an obscure side project into the most downloaded open-source LLM series in the world. Over 700 million downloads on Hugging Face. Nearly 400 open-sourced models. Over 180,000 community fine-tuned derivatives. He did this in under three years.

He wasn’t alone. Three senior Qwen executives departed in 2026: Hui Binyuan (head of Qwen Code, left for Meta in January), Yu Bowen (post-training research, resigned March 3), and Kaixin Li (core contributor to Qwen 3.5 and Coder). Alibaba’s stock dropped 5.3% in Hong Kong, its biggest intraday loss since October.

Alibaba was restructuring the Qwen team, breaking Lin’s vertically integrated unit into horizontal functional teams. The company hired Zhou Hao from Google DeepMind (who worked on Gemini 3.0) to lead post-training RL, reporting directly to the CTO rather than to Lin.

Alibaba spent 3 billion RMB on Chinese New Year promotions for the Qwen consumer app. Monthly active users surged from 31 million to 203 million in a single month. All that compute serving consumer chat sessions is compute not training the next frontier model. Lin wanted to push the frontier. Alibaba needed to monetize the existing one.

It didn’t help that Lin had publicly said in January that “China has less than a 20% chance of winning the AI race” and called that figure “already highly optimistic.” That kind of candor plays well internationally. It plays less well inside a Chinese tech conglomerate that just spent 3 billion RMB telling consumers its AI is world-class.

Lin hasn’t announced a next move, but is almost certainly staying in China. US export controls, visa restrictions, and the political climate all cut against a cross-Pacific move. The more likely paths: he starts his own company (he’s 32, has the credibility, and China’s VC ecosystem is hungry for AI founders), or he joins ByteDance, Moonshot AI, or one of the well-funded startups challenging Qwen’s lead.

This is Alibaba’s third major AI talent exodus in four years. At some point this stops being about individual departures and starts being about whether Alibaba can retain the kind of people who build frontier AI.

OpenAI & Anthropic Go to Congress Before DeepSeek Ships New Model

DeepSeek V4 was supposed to ship in the first half of 2025. It’s now March 2026 and the model still hasn’t dropped.

TechNode reported on March 2 that V4 was planned “this week.” The Financial Times confirmed a first-week-of-March target. That window has come and gone. The timing was supposed to be strategic: launch alongside China’s “Two Sessions” parliamentary meetings, position DeepSeek as a national AI champion at the highest-visibility political moment of the year. Instead, the Two Sessions are underway and V4 is still unreleased.

The delay is landing in a hostile environment that didn’t exist a year ago. On February 12, OpenAI sent a memo to the U.S. House Select Committee on China claiming to have observed “ongoing attempts by DeepSeek to distill frontier models of OpenAI and other US frontier labs, including through new, obfuscated methods.” Twelve days later, Anthropic piled on, accusing three Chinese AI companies of “industrial-scale” distillation campaigns: over 16 million exchanges with Claude generated from roughly 24,000 fraudulently created accounts. Bloomberg called the whole effort a “self-defeating bid to preempt DeepSeek.”

OpenAI brought this to Congress right before V4’s expected launch window, and Anthropic followed days later. The effect, intentional or not, is that V4 now launches into a narrative where its performance will be treated as evidence of theft rather than engineering. If V4 benchmarks land suspiciously close to GPT-5 or Claude on certain tasks, the distillation allegations get louder. If they don’t, the model underperforms.

Meanwhile, U.S. officials say V4 was trained on smuggled Nvidia Blackwell chips at a data center in Inner Mongolia, because training on Huawei hardware hit performance ceilings. Nvidia called the reports “far-fetched” but declined to comment further. DeepSeek optimized V4’s inference for Huawei Ascend chips and denied Nvidia and AMD pre-release access, but the training itself apparently required American silicon. Export control violations plus distillation allegations are a lot of legal surface area for a company about to release the most scrutinized open-weight model in history.

The specs, based on leaked details and published research papers, are still substantial. A trillion-parameter mixture-of-experts model with roughly 32 billion active parameters per token. Native multimodal support for text, images, video, and audio. A 1-million-token context window, roughly 8x V3’s 128K. Expected Apache 2.0 license. Estimated pricing around $0.14 per million input tokens, versus $3.00 for GPT-5 and $5.00 for Claude Opus.
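To put those leaked numbers in proportion, here is a minimal back-of-the-envelope sketch. Every input is one of the unconfirmed figures quoted above; the constant and function names are illustrative, and "128K" is assumed to mean 128,000 tokens (it would be 131,072 under a binary reading, which lowers the context ratio slightly).

```python
# Rough ratios from the leaked DeepSeek V4 figures quoted in the text.
# All inputs are unconfirmed estimates, not official specifications.

TOTAL_PARAMS = 1_000_000_000_000  # "trillion-parameter" mixture-of-experts model
ACTIVE_PARAMS = 32_000_000_000    # "roughly 32 billion active parameters per token"

V4_CONTEXT = 1_000_000            # 1-million-token context window
V3_CONTEXT = 128_000              # assumption: "128K" read as 128,000 tokens

# Estimated USD per million input tokens, as quoted in the text.
PRICE_PER_M_INPUT = {"DeepSeek V4": 0.14, "GPT-5": 3.00, "Claude Opus": 5.00}


def active_fraction(active: int, total: int) -> float:
    """Share of the model's weights that are active for any single token."""
    return active / total


def price_multiple(other: str, base: str = "DeepSeek V4") -> float:
    """How many times more expensive `other` is than `base` per input token."""
    return PRICE_PER_M_INPUT[other] / PRICE_PER_M_INPUT[base]


if __name__ == "__main__":
    share = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
    print(f"Active share of weights per token: {share:.1%}")
    print(f"Context window vs V3: {V4_CONTEXT / V3_CONTEXT:.1f}x")
    for name in ("GPT-5", "Claude Opus"):
        print(f"{name} input price vs V4: {price_multiple(name):.1f}x")
```

On these inputs, about 3.2% of the weights are active per token, the context window is about 7.8x V3’s, and the quoted input prices for GPT-5 and Claude Opus are roughly 21x and 36x the V4 estimate.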

DeepSeek and Qwen captured 15% combined global AI market share by January 2026, up from 1% a year prior. OpenAI’s share fell from 55% to 40% over the same period. The longer V4 stays unreleased, the more oxygen the distillation allegations get. And the more the narrative shifts from “can this model compete?” to “did this model cheat?” That may be exactly the point.

OpenAI Ships GPT-5.4 In Their Worst Week

OpenAI launched GPT-5.4 on March 5, and many developers who were doing roughly 90% of their work in Claude a month ago now split it 50/50. This is the first OpenAI model where planning and coding feel Opus-level. Matt Shumer called it “the best model in the world, by far.”

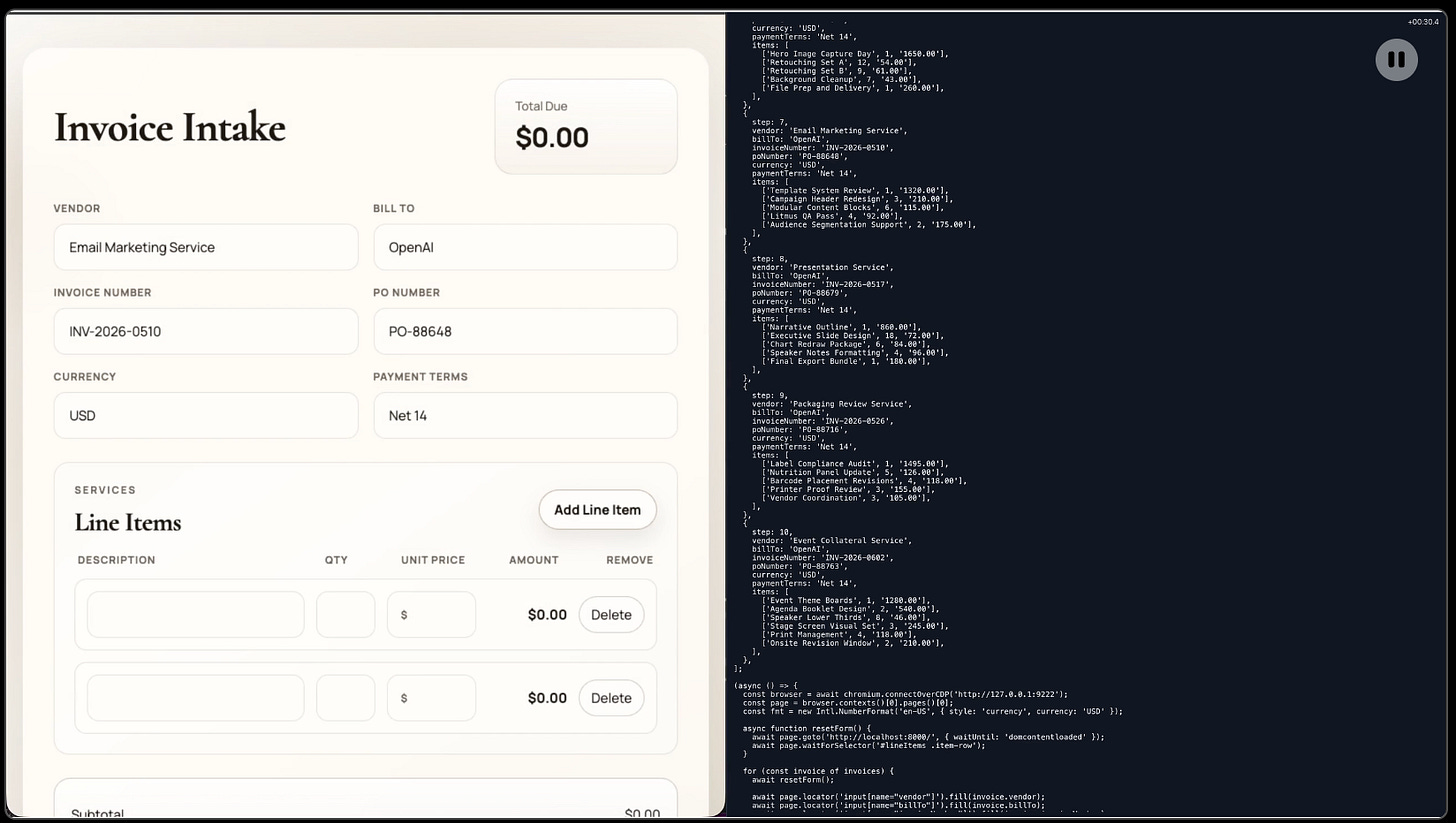

GPT-5.4 can look at your screen, move your mouse, and type on your keyboard to complete multi-step workflows across applications. It is the first general-purpose AI model to beat humans on the OSWorld desktop navigation benchmark. This is what makes AI agents real: software that can actually use other software the way you do.

OpenAI is focusing on finance, launching ChatGPT for Excel and Google Sheets. Reusable “Skills” handle earnings previews, comparables analysis, DCF modeling, and investment memo drafting. Data integrations with Moody’s, Dow Jones Factiva, MSCI, and Third Bridge bring market data into the same workflow. OpenAI worked with finance practitioners to optimize GPT-5.4 specifically for the kind of work that junior analysts spend 80-hour weeks doing: three-statement models, scenario analysis, data extraction.

When Anthropic launched the legal plugin three weeks ago, we wrote that Anthropic was moving from model supplier to workflow owner. GPT-5.4’s finance push is OpenAI’s version of the same play. Both labs are now betting on vertical tools rather than general chat alone.

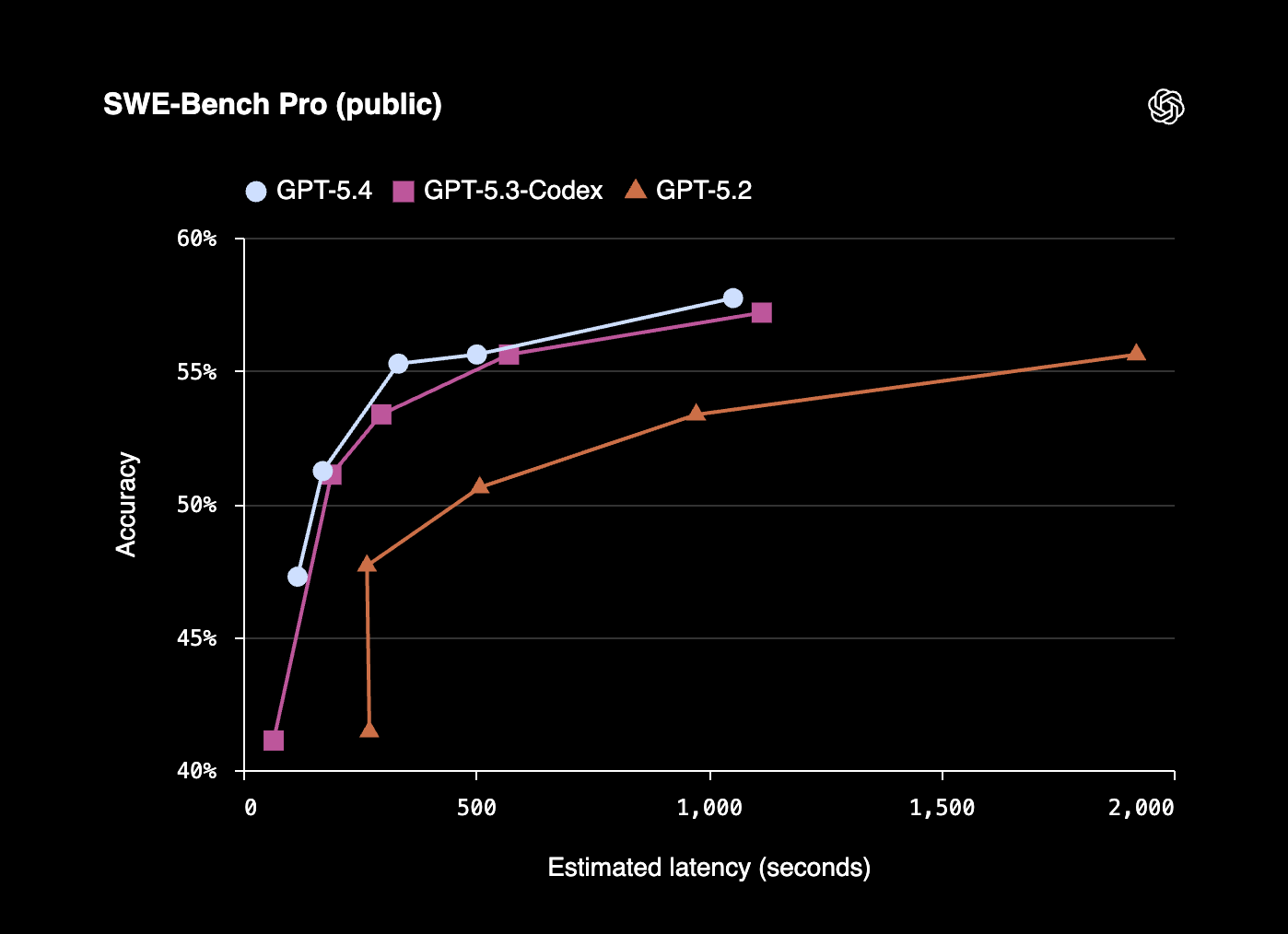

Claude still leads on SWE-Bench: Claude Opus 4.6 scores 80.8% versus GPT-5.4’s 77.2%. For complex multi-file engineering, the consensus from developers is that Claude remains the stronger specialist. But GPT-5.4 is the better generalist across knowledge work, professional documents, and now desktop automation.

Yet OpenAI dropped all of this into its worst brand crisis in years. The QuitGPT boycott reached 2.5 million users who cancelled or pledged to leave after OpenAI’s Pentagon deal. Protesters showed up outside OpenAI headquarters. Claude surged to #1 on the Apple App Store.

GPT-5.4 is the best product OpenAI has ever shipped, but it might also be the least noticed.
