Anthropic Is Google Cloud's Growth Engine, Runs to SpaceX for Compute, & Skips the Consultants

Google Cloud's growth matches Anthropic's growth, Musk monetizes excess Colossus 1 capacity with Anthropic, and the labs are vertically integrating before the consultancies do

Google Cloud Is Now an Anthropic Play

On Tuesday, Anthropic committed to spending $200 billion with Google Cloud over five years. The deal covers multiple gigawatts of TPU capacity, with units coming online starting in 2027.

That number is bigger than Google Cloud’s entire 2025 revenue. So the more interesting question runs the other way: how much of Google Cloud’s growth is already Anthropic’s growth?

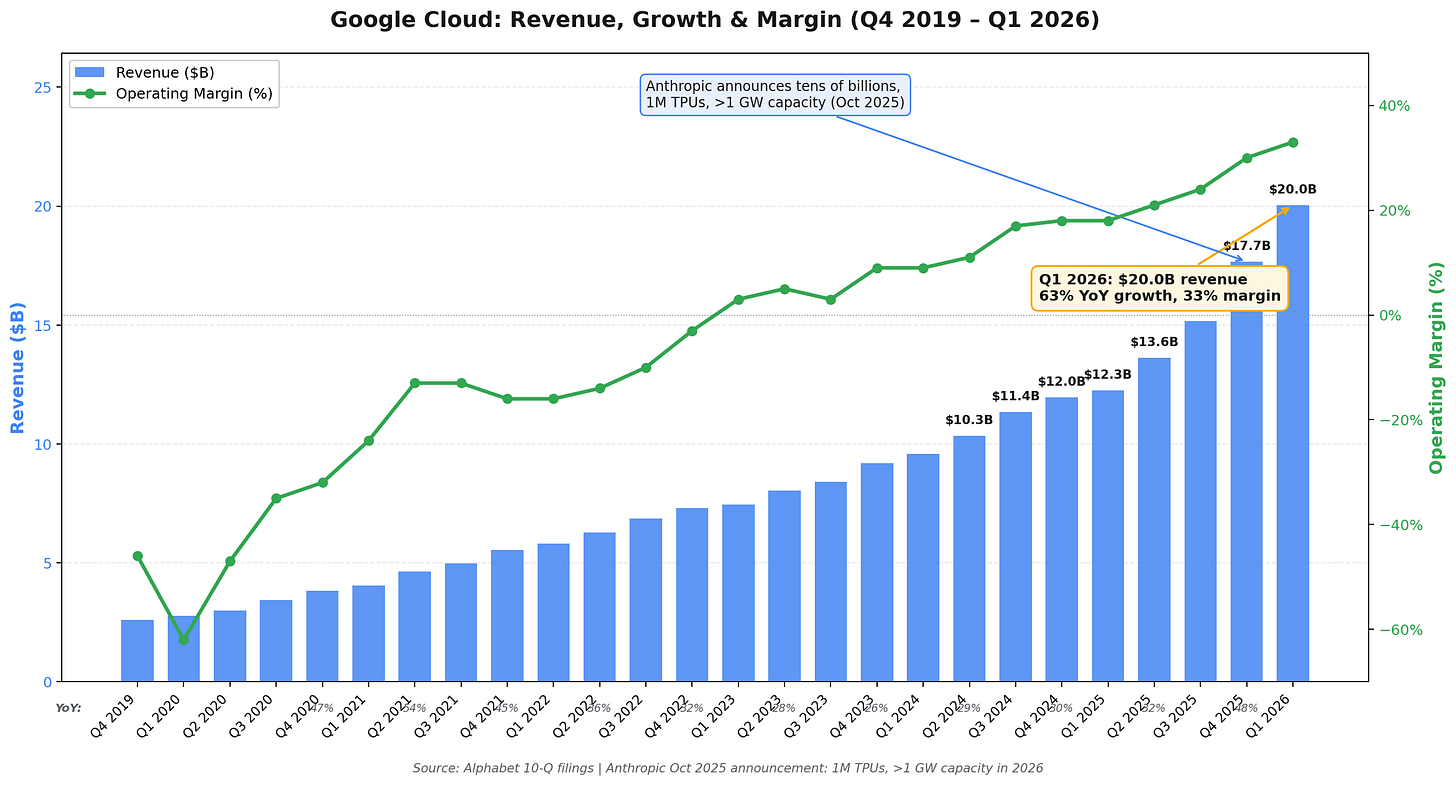

Look at the past 5 quarters.

Q1 2025: 28% growth

Q2: 32%

Q3: 34%

Q4: 48%

Q1 2026: 63%

Hyperscalers usually decelerate as they scale because the law of large numbers catches up, so what’s the catalyst?

In October 2025, Anthropic announced it would expand its use of Google Cloud to up to 1 million TPUs, and “well over a gigawatt of capacity online in 2026.” That announcement is the inflection on the chart.
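To make the inflection concrete, here is a minimal sketch that takes the five growth rates listed above and computes how much the rate changed each quarter, in percentage points. The quarter labels for Q2 through Q4 assume calendar 2025, which the list implies but does not spell out.

```python
# Year-over-year Google Cloud growth rates as quoted above, in percent.
# Labels for Q2-Q4 assume calendar 2025.
growth = {
    "Q1 2025": 28,
    "Q2 2025": 32,
    "Q3 2025": 34,
    "Q4 2025": 48,
    "Q1 2026": 63,
}

quarters = list(growth)

# Change in the growth rate versus the prior quarter, in percentage points.
deltas = {
    current: growth[current] - growth[previous]
    for previous, current in zip(quarters, quarters[1:])
}

for quarter, delta in deltas.items():
    print(f"{quarter}: {delta:+d} pts")
```

The growth rate moved by 4 and 2 points in the two quarters before the October announcement, then by 14 and 15 points in the two quarters after it.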

On the Q1 2026 earnings call, Sundar Pichai said:

Google Cloud is differentiated because we are the only provider to offer first-party solutions across the entire Enterprise AI stack... Our Enterprise AI solutions have become our primary growth driver for Cloud for the first time. In Q1, revenue from products built on our gen AI models grew nearly 800% year over year.

And the relationship goes beyond cloud revenue. Google owned 14% of Anthropic as of March 2025. Anthropic is rumored to be raising at a $900 billion valuation, which, assuming that stake has not been diluted since, would make Google’s stake worth about $126 billion.
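The stake math is simple enough to check by hand, but a short sketch shows how sensitive it is to dilution. The 14% stake and the $900 billion valuation come from the paragraph above; the `dilution` parameter and the 10% example value are hypothetical, added only for illustration.

```python
def stake_value_bn(valuation_bn: float, stake_pct: float, dilution: float = 0.0) -> float:
    """Value of an equity stake in billions of dollars.

    valuation_bn: company valuation in billions of dollars
    stake_pct:    ownership in percent (14 means 14%)
    dilution:     fraction of the stake lost to later rounds (hypothetical input)
    """
    return valuation_bn * stake_pct / 100 * (1 - dilution)


# Figures from the text: 14% stake, rumored $900 billion valuation.
undiluted = stake_value_bn(900, 14)

# Hypothetical scenario: the stake has been diluted by 10% since March 2025.
diluted = stake_value_bn(900, 14, dilution=0.10)

print(f"Undiluted stake: ${undiluted:.1f}B")
print(f"With 10% dilution (assumed): ${diluted:.1f}B")
```

The undiluted case reproduces the $126 billion figure. Any dilution from funding rounds after March 2025 would pull the number down proportionally.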

So Google is monetizing Anthropic three ways at once: cloud revenue (rented compute), enterprise AI revenue (inference passthrough), and equity gains (mark-to-market on the stake). Anthropic’s $200 billion commit feeds all three.

If you’re holding Alphabet stock, congrats, you also own Anthropic. If you’re a Google Cloud sales lead pitching against AWS or Azure, your biggest customer reference is a competitor of theirs. But if Anthropic stumbles, three Alphabet line items can move in the other direction.

Anthropic Gets Compute from SpaceX, While Claude Keeps Slipping

On Tuesday, Anthropic took over the excess capacity at Colossus 1, the 300+ MW Memphis data center with 220,000+ GPUs that used to be xAI’s flagship. Anthropic doubled Claude Code’s five-hour rate limits the same day.

Colossus 2 is SpaceXAI’s flagship now. If you recall, Musk merged xAI into SpaceX in February as SpaceXAI in a deal valued at $1.25 trillion, then partnered with Cursor on April 21 for $10 billion in committed work plus a $60 billion option to acquire by year-end. Cursor is still ramping inside SpaceXAI’s stack, which leaves Colossus 1 with capacity that would otherwise sit idle.

In other words, this is a solid win-win. Anthropic needs compute, and SpaceX has excess capacity it can monetize. We last wrote that Anthropic was throttling Claude consumers to feed enterprise compute. Doubling Claude Code limits the same day as the deal is the most direct admission Anthropic could make that the throttle was about supply.

But the product is still slipping. On April 24, Anthropic published a post-mortem confirming three separate changes degraded Claude Code:

Reasoning effort was lowered from high to medium on March 4 to fix UI latency, which made the model less capable on complex tasks. That frustrated Claude Code users, who set compute levels manually with the /model command.

A caching bug shipped on March 26 wiped reasoning history every turn instead of after one hour of inactivity.

A system prompt change on April 16 capped tool-call text at 25 words and final responses at 100 words. Coding evals dropped 3% on Opus 4.7.

The complaints that forced the post-mortem started with a GitHub issue from Stella Laurenzo, a Senior Director in AMD’s AI group. She analyzed 6,852 Claude Code sessions, 17,871 thinking blocks, and 234,760 tool calls and concluded the model could not be trusted for complex engineering work.

For Opus 4.7 specifically, Reddit and X consensus is that real-world cost is 1.5x to 3x higher than 4.6, multi-file edits are worse, refusal rates are up, and the conversational warmth that earlier Claude models had is gone. Part of me wonders if the conversational warmth is gone because users have become more frustrated and antagonistic. I know I have.

Anthropic’s own migration guide confirms Opus 4.7 follows instructions “much more literally” than 4.6, which is the polite version of the complaints.

Personally, I started bifurcating my work between Claude Code and Codex. Last weekend, Codex one-shotted a refactor with GPT-5.5 that Opus 4.7 on max compute couldn’t get right after three attempts. Anthony Maio’s Substack post “Codex Got Better Because Claude Code Got Weird” makes the same case from the other direction.

Colossus 1 buys Anthropic time on the supply side, but it doesn’t fix the engineering culture that shipped three product-degrading changes in seven weeks. One of the big linchpins justifying Anthropic’s valuation is switching costs, and if enough users are switching, that could raise alarms ahead of a future IPO.

Anthropic & OpenAI Skip the Consultants in the Same Week

In the same week, Anthropic and OpenAI both launched dedicated enterprise services joint ventures.

Anthropic’s JV is a $1.5 billion vehicle with Blackstone, Hellman & Friedman, and Goldman Sachs as founding partners. Blackstone and H&F each put in $300 million, Goldman put in $150 million, and Apollo, General Atlantic, Leonard Green, GIC, and Sequoia rounded out the cap table.

OpenAI’s version, called The Deployment Company, raised $4 billion at a $10 billion pre-money valuation.
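A quick back-of-the-envelope sketch of both cap tables, using only the figures above. Two assumptions are mine: that Anthropic’s $1.5 billion is the total capital committed to the JV, and that OpenAI’s $4 billion is all new money on top of the $10 billion pre-money valuation.

```python
# Anthropic JV: named commitments from the text, in millions of dollars.
jv_total_m = 1_500  # assumed to be total committed capital
named_commitments_m = {
    "Blackstone": 300,
    "Hellman & Friedman": 300,
    "Goldman Sachs": 150,
}

named_total_m = sum(named_commitments_m.values())
# Remainder attributable to the other investors listed above.
remaining_m = jv_total_m - named_total_m

# OpenAI's The Deployment Company: figures in billions of dollars.
raised_bn = 4
pre_money_bn = 10
post_money_bn = pre_money_bn + raised_bn
# Share of the company held by the new money after the round.
new_money_share = raised_bn / post_money_bn

print(f"Anthropic JV, three named checks: ${named_total_m}M")
print(f"Anthropic JV, left for the other investors: ${remaining_m}M")
print(f"OpenAI vehicle post-money: ${post_money_bn}B")
print(f"OpenAI vehicle, new-money share: {new_money_share:.1%}")
```

Under those assumptions, the three named checks cover half of Anthropic’s JV, and the new investors in OpenAI’s vehicle hold a little under 29% of it at a $14 billion post-money valuation.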

Four days after Anthropic’s announcement, the JV shipped a suite of pre-built AI agents for the world’s largest banks, using Claude Opus 4.7 tuned for financial work, full Microsoft 365 integration, and a Moody’s data partnership.

Frontier labs spent two years pretending models alone would sell, but the Fortune 500 wants pre-built agents wired into their data, their compliance stack, their workflow, their identity provider, and their procurement contract. That layer was typically managed by Accenture, Deloitte, BCG, and the AI consultancies that raised at insane valuations on the integrator thesis.

But Anthropic and OpenAI just walked into that lane using Goldman + Blackstone for distribution. The integrators planned on capturing the implementation margin on every enterprise deal, but labs are vertically integrating before that happens.

You can see the consequences of this playing out in Accenture’s stock price.

And we know why. First, AI consultancies are losing their most important pitch: “we’re the neutral integrator across model providers.” Anthropic’s JV is not neutral. It picks Claude. OpenAI’s is not neutral. It picks GPT.

Second, Goldman, Blackstone, and Apollo are distribution. Goldman talks to every CFO in America. Blackstone owns 250+ portfolio companies. Apollo has 30 million workers across its portfolio.

Third, this is why the $200 billion Google Cloud commit had to happen. You can’t sell pre-built agents to JPMorgan, Goldman, and a thousand mid-market banks without compute that scales 100x from here. The capex story and the go-to-market story go hand in hand.

The labs are competing with the consultancies now, not just each other.

The Signal

3 takes that didn’t fit above, plus one bet.